
A couple of years ago I was facilitating a Managing for Results workshop and I mentioned a phrase that my good friend Thembi liked so much that she has literally named me after it! The phrase was “Fugitive Indicators”. For you to grasp the concept quickly I will first refer to “Fugitive Targets”. First, I will explain how Monitoring and Evaluation (M&E) works. M&E is the discipline (art or science) of measuring commitments of good intentions to see whether they are being achieved. To do so you need the commitment, i.e. a “Result statement”, e.g. Goal: To Reduce Poverty in Zimbabwe by 2030, and then a measure for the result statement, e.g. “Proportion of Zimbabweans Living below the Poverty Datum Line”. Now to convince yourself that you have reduced poverty you need to know how many people are poor before you intervene (that’s called a baseline, benchmark etc.) and also how many you can pull out of poverty (that’s your “Target”). Now let’s say, for illustration purposes, you are “targeting” to reduce the proportion of people living below the poverty datum line from the current 30% to 20%; the role of the M&E practitioner is to measure that commitment.
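To make the baseline/target arithmetic concrete, here is a minimal sketch in Python. The 30% baseline and 20% target come from the illustration above; the function name `progress_towards_target` and the "actual" reading of 26% are purely hypothetical, made up to show the calculation.

```python
def progress_towards_target(baseline, target, actual):
    """Return the share of the planned change (baseline -> target) achieved so far."""
    planned_change = target - baseline
    if planned_change == 0:
        raise ValueError("baseline and target must differ")
    return (actual - baseline) / planned_change


baseline = 30.0  # % living below the poverty datum line before the intervention
target = 20.0    # % we have committed to reach
actual = 26.0    # hypothetical measurement, for illustration only

progress = progress_towards_target(baseline, target, actual)
print(f"{progress:.0%} of the planned reduction achieved")
```

With these made-up numbers, four of the ten planned percentage points have been achieved. A value above 100% would mean over-performance and a negative value would mean things got worse than the baseline.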

Now back to Fugitive Indicators and Targets! I was reviewing a National Level Results Framework and I bumped into an Indicator that read “Number of gender-responsive measures in place for equitable access and benefit in sharing of natural resources and biodiversity.” I immediately thought of Thembi! M&E practitioners may find several problems with how the Indicator is structured (google CLEAR, SMART Indicators etc.) but what I’m interested in is the fugitiveness of the indicator and subsequent targets. Remember this is a national level indicator to which several government and non-government actors, including the private sector, are contributing. All the actors will enact “measures” independently of each other and it’s not known how many measures will be enacted. This is where the ‘fugitiveness’ comes from. So if we set 10 measures as our target, we might under- or over-perform on the indicator not because we are geniuses or dumb but by a stroke of luck or fate! In every intervention we should have a measure of control over our intentions; if we don’t, then our targets are fugitive (elusive in some sense because they keep moving; it’s like we are chasing the wind!). In most programs/interventions resources often place a measure of control. It’s like we cannot achieve more than our resources can buy.


This is in no way a conventional ‘book review’. It is more of my interaction with the book as I read it. I had no intention of doing a review but colleagues kept asking for my take on the book - so here we go!

It’s not a practice guide or something like that for Evaluators, but a collection of scholarly articles on the state of Evaluation in Africa. These papers were written from an analysis of Evaluation papers, articles etc. found in the AfrED database created by CLEAR-AA and CREST, as well as a survey of evaluators and authors in the region. The work is of an unprecedented empirical depth and detail on the status of Evaluation in the region.

That it’s based upon a study of evaluations, journal articles and conference papers found in a database poses some generalisation issues. First, the data relied upon is from only 22% of Africa (largely Anglophone, although I note the inclusion of some Francophone countries in Chapter 3). Secondly, it’s based on Open Source Reports; an even larger amount is not shared openly. The authors acknowledge this limitation. However, this is not to discredit the book. To the contrary, this is a marvelous piece of work that has gone where no other has. It is a great start.

The Editors were careful to set the time period (2005-2015) which they are looking at. In the fast changing world of M&E you wouldn’t want the book to seem to be static in time. They seem to acknowledge that tomorrow things may be different. I hope there are plans to have several editions over time, and this could become the State of Evaluation in Africa Report.

The book champions the Made in Africa narrative. I am however uncomfortable with how this is presented. It may lead to a “they and us” interpretation which sounds as if adopting western-developed methods is a second colonization of Africa. There is no straight answer to the question: Is Africa different or are we making Africa different? Furthermore, for me Africa is not a homogeneous ontological, axiological and epistemological entity. It’s like the proverbial tactile elephant. Rather, I think we need a fusion of western and indigenous knowledge systems.
This way we will not fall into Mugabeism: ‘keep your west to yourselves and we keep our Africa to ourselves’. I dream of a day when evaluation theory and methods developed in Africa will be applied in Europe or the United States; this can only be possible if we are inclusive. In Made in Africa our quest is not for superiority but applicability and comparability. The current stunted growth and confusion in Evaluation as a discipline can be traced to identity rivalry between North American and European evaluators. We should avoid the same mistakes. One fundamental question we should be asking in the Made in Africa narrative is “What do we want to change and why?”

The authors are on point, however, in their analysis of what they term “collaborations” between Western and local evaluators. Evaluation is still stuck in the ‘70s development approaches. Donors are slow to recognize local capacity. The book could well have drawn a causal line between its finding that evaluations in Africa are being conducted by Westerners (although this is contradicted in Chapter 6) and the Eurocentric nature of the methods. A Shona proverb says “mbudzi kudya mufenje hufanan’ina”. The Chapter 6 study found no significant relationship between country of origin and choice of evaluation method. I am not sure if there’s any between the country where one obtained evaluation qualifications and choice of methods.

Chapter 4 analyzes evaluation reporting standards using Scandinavian donors as a case. The chapter is a must-read for evaluators and commissioners of evaluation. It presents good evidence upon which a discussion on standards can be based. The analysis should be extended to western funding agencies like USAID, DFID and CIDA. The chapter illustrates how evaluation reporting is neither standardized nor aligned to international reporting standards. It notes with concern how ethics are neglected in Evaluation.
However, beyond research convenience, it doesn’t explore how this affects the development trajectory and use of evaluation reports.

The book explores in sufficient detail the major narratives in African Evaluations including:

The book leans heavily towards public sector experiences/conceptual frameworks (whether this was conscious or not I’m not sure). In countries like Zimbabwe, where very little donor funding is channeled through government, the landscape may be different.

Findings on who is carrying out evaluations, though not surprising, make sad reading for southerners. The book notes that most evaluations (86%) are carried out by Northerners. The book also notes that the majority of Evaluations are of a poor quality. Does this then suggest that quality and capacity issues are not only African problems?

The book makes a bold and sweeping statement that African evaluations “serve more of an output monitoring function than a platform for strategic decision-making.” I struggle to convince myself that merely looking at evaluation reports, without looking at what happens thereafter, is enough. Such a conclusion could best be made after reviewing Management Responses and Evaluation Results Use processes and products.

A nagging question that kept popping up at the back of my mind whenever the book mentions “commissioners of Evaluation” was: where are project/program implementers? Oftentimes implementing partners like SNV, World Vision etc. commission evaluations on projects they implement that are funded by donors like Sida. So when the book talks of the Evaluating Agency, are they looking at the funder and subsuming the implementer? This has practical implications since capacity, policies and procedures differ between and across agencies.

I don’t find the book’s conclusion that most evaluations are for management purposes surprising. One explanation can be found in the emergence of the ‘implementing at scale’ approach. In this approach implementing agencies first search for what works through ‘pilot projects’. These are subjected to Impact studies and, once a positive causal relationship is identified, implementation is scaled up. During scaling, subsequent evaluations are confirming targets set etc.
I am not sure it’s also necessary to see the management-governance continuum as a development hierarchy, otherwise that would contradict utility theory.

While the book is in no way a methods guide book, scholars and practitioners may find the Methods section of each paper/chapter interesting. A number of methods are described as they are used: methods like content analysis, appreciative enquiry, the Delphi technique etc. Chapters 5 and 6 dwell in detail on Evaluation methods (quantitative, qualitative and mixed methods). The authors could have shed light on the compatibility, or lack thereof, of ‘approaches’ vs ‘methods’.

On Evaluation characterization, I particularly agree with the dichotomization of evaluation into Formative & Summative. This simplification is important for standardization. It’s a temporal import upon which you can superimpose other facets of Evaluation, including Result Level (i.e. Output, Outcome or Impact) as well as whether it’s a result or process evaluation. I will post a graphic presentation of my reasoning elsewhere in this blog.

Chapter 3 confronts the Gender & Equity Question in evaluation. It’s often tempting to fault evaluators for not including G&E in their evaluations without realizing that evaluators are often at the end of a chain of poor designs. Project designers had no G&E lenses, implementers too. When a (non-formative) evaluator comes they cannot impose a new design; that would be tantamount to squaring a circle. Evaluators are victims of circumstance here. Whatever happened to evaluability assessment?

Without falling into the grammar/spellcheck pitfall I will just make a small observation. A few chapters were not properly proofread. Presentation of findings was sometimes hard to follow, e.g. in Chapter 6 the one and only graph did little justice to the rich data in the Quality Framework sections. Individual graphs or a composite graph would have been better.
I would recommend that African evaluation scholars and practitioners have a look at the book. It is definitely at the forefront of the most current evaluation narratives in Africa and beyond!

Two major ills bedeviling M&E are corruption and pretense. Those willing to corrupt and be corrupted are too numerous to count in the development sector. At the same time M&E is like intimacy, where the most celibate cardinal can pontificate about it - everyone knows something about M&E though no one knows everything about it. It seems these days everyone is an M&E consultant. I’m not calling for the regulation of the noble profession but there is a need to empower clients to identify bona fide experts and weed out pretenders. A bad job has the ultimate effect of engendering a bad reputation and consequently limiting the growth and advancement of the profession.

A colleague once remarked that he had hardly seen an evaluation that was not contested. He pointed out that there’s always something to criticize in an evaluation. It could be the comprehension of the scope of the assignment, the methods of data collection, the depth of analysis, the interpretation of results or even the presentation. Evaluation Associations, academic institutions and even clients have a role to play in building the capacity of evaluators. I say even clients - SNV, a Dutch development organization, had a capacity building program for their consultants (Local Capacity Builders, they called them). At first I thought it an oxymoron but I appreciated the thinking behind it - everyone learns. More and more spaces for learning and sharing should be built. M&E is a fast evolving discipline; those who have been long in the game may need to update themselves and the young and emerging evaluators need mentoring.

Corruption is a cancer. Recently I put in a bid for an end-of-project evaluation for a faith-based organization. To my surprise I was told I had not included a financial proposal among the documents I submitted.
I rechecked my sent box. Sure thing, the financial proposal was there! Well, to cut a long story short, I was not even shortlisted; they had to safeguard the preferred! A couple of years ago I won a bid to do an evaluation for a big international NGO. As we were preparing to leave for field work the officer responsible approached me and said “We have not received our dues”. I was taken aback but soon realized it was the 10% gang. The network wove right to the top. Your guess is as good as mine - the report took a lot of time and defending to be accepted, if at all it was! A boss once pressured our M&E Unit to award a US$150k Impact Evaluation to “a reputable consultant” against our better judgement. It was not long before we discovered an intimate relationship between the boss and the consultant. We lived to regret the consultant’s expertise. I could go on and on recounting these horror stories but many have had these experiences. Development agencies pay lip service to the fight against corruption. It’s not only in M&E that corruption is endemic; it’s in almost all spheres of development, from procurement to program implementation. One thing to remember is that a corrupt deal leads to poor service, as the corrupter has a feeling of entitlement and the corrupted is insecure and suffers impaired judgment.

I was not brought up in a world of freebies. I don’t remember angel investors or good Samaritans knocking at our door. We ate what we hunted. Effort was the key. That does not discount miracles. For me miracles lie in the preter-natural that remains after the deed. I don’t strive to be rich but to be comfortable. If riches achieve comfort, then they are most welcome. This is why I can’t comprehend the get-rich-quick generation and the mirage of Ponzi schemes and their cousin, the so-called network marketing. Why would you want a free car, house etc.? Oh for goodness’ sake, work for it.
Yes, true, the latest models make you work, but don’t forget that in a normal system the entrepreneur and the enterprise work too. There is no food for a lazy man. It’s called (by the Bible) a 'just recompense of reward'. The concept of a 'just reward' is key. Effort=Reward. Anything outside of this is a zero-sum game. It leads to Hardin's tragedy of the commons. All these are a manifestation of a cruel, greedy world. No regard for the other. No zeal for Pareto's optimality but humane equilibrium and distribution. People wonder when the gap between the rich and poor is exposed. Recently an INGO dared to tell the Davos Club in their sub-zero hideout that things were getting worse. No one came to their aid, no, not even the 4th estate, who seemed to pander to the prawns feasting table of the Club. No, those guys are not bad. They are smart and some of them hard working. And predestination and foreordination made them so too. But there are others who glory in dehumanizing the unfortunate and get richer at the poor's expense. I see educated people neglect their professions to wander onto the get-rich gravy train. Sometimes it works, but in unstable economies like ours the consequences are humbling. Remember the DD who tried hustling and he still is a hustle. No, I’m not gloating or demeaning him, but I’m learning. It’s folly not to learn from others' mistakes. You don’t have to make the mistake yourself to learn. You might never. Remember the Gobos who blew his brains out trying to see if a revolver kills. I guess he got the answer. So work, guys. Make some effort. Be found doing and you will surely get a just recompense of reward.

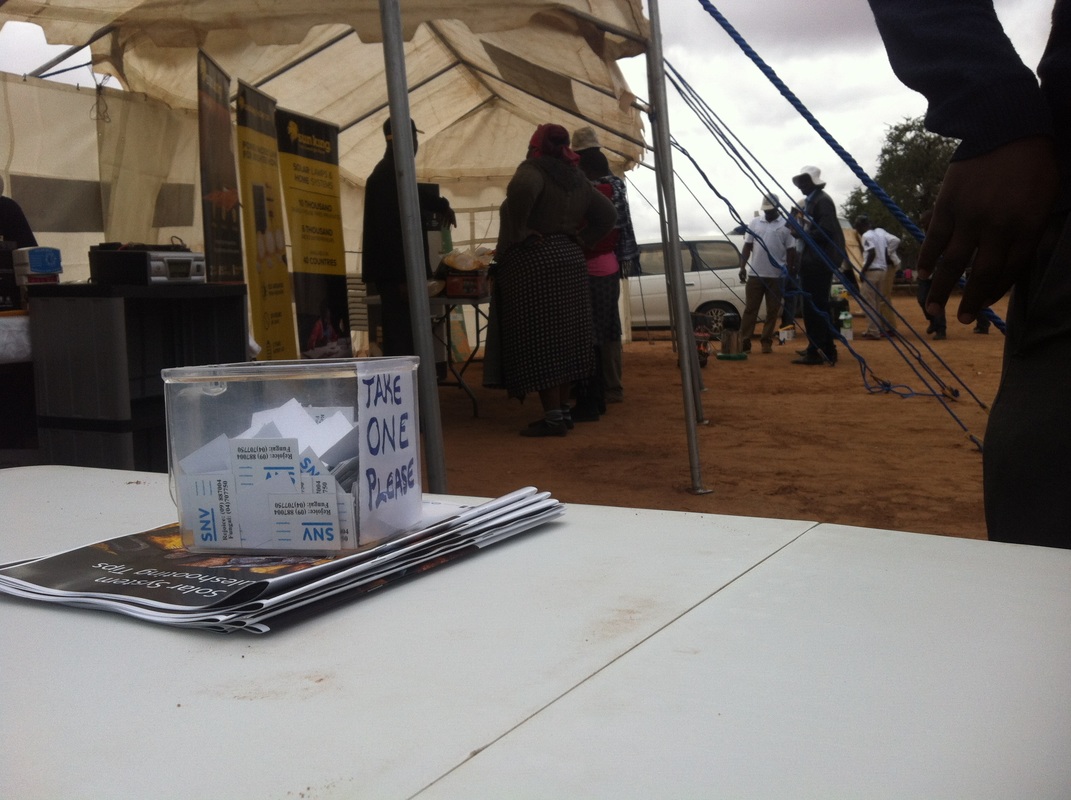

How to Estimate Attendance

Some performance measures at the output result level require us to estimate "the number of people attending" an activity. Training is one of them, but counting people at a training workshop is pretty easy because the numbers are generally small and the activity is pretty much organized. But how about estimating the number of people attending a Food Fair, Road Show or similar open-access, open-air activity? The challenge is around validity and accuracy. There are several ways to count attendance in this scenario but I will describe one method I often use. Let’s imagine it’s a Solar Fair. A solar fair is an exhibition event where solar entrepreneurs, consumers, regulators, and other stakeholders meet for information exchange, technology promotion and exchange. It may or may not include the actual sale of solar products to end-users. You want to count the number of people attending this fair in a non-intrusive way. You could ask people to fill in an attendance register, but this could be too intrusive; someone might ask, "Why do you want my personal details when I’m here just to see what is happening?" Sometimes it might not even be possible because of literacy problems. Imagine you are conducting the fair in a remote rural location where your intervention logic tells you solar products are most needed because there is no grid electricity, but the community has equally low access to education and they cannot write or read! Of course you can ask and write their names on your own. But imagine 5000 people attending. You will come out with a sore thumb and a numb hand! So what is the alternative? The following steps might help.

As the value chain approach gains currency in economic development projects across Africa, the burden and cost of monitoring and evaluation is growing. Traditional monitoring and evaluation approaches are tedious and costly when applied in a value chain development focused project, mainly because a value chain is long and measuring every facet of it would require large resource investments, which many donors and implementing organizations could not afford, while producing very little valuable information. This paper presents an approach that reduces cost and yet elicits information about the status of the value chain, allowing project managers and stakeholders to make important decisions without overrunning budgets. The approach involves identifying the ‘vital signs’ of each value chain and concentrating effort on those vital signs, which are indicators of the value chain’s health. It is based on systems concepts and tracks stressor-based indicators. It is based on the analysis of the following characteristics: Hierarchy of Scale, Value Chain Pillars and Dynamism. In the Vital Signs Approach, monitoring is only done on nodes that represent the overall health of the entire value chain. The Vital Signs Approach will allow project stakeholders and managers to appreciate progress on the value chain without necessarily measuring everything as propounded in the traditional approaches. This paper will help value chain development practitioners develop cost-effective monitoring and evaluation systems, whether they are resuscitating collapsed value chains or developing new ones.

Vital Signs Approach to Value Chain Monitoring

This approach presents an opportunity for identifying indicators of Value Chain health. It is based on systems concepts and tracks stressor-based indicators. Systems analysis is based on the analysis of a number of characteristics:

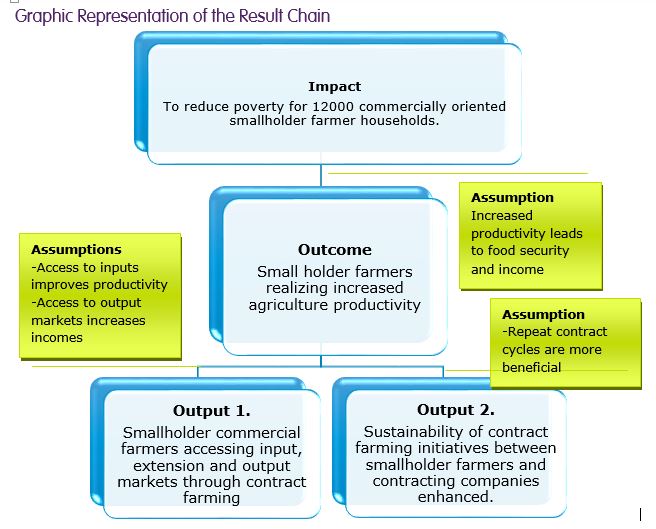

In the Vital Signs Approach, monitoring is only done on nodes that represent the overall health of the entire value chain. Such a node is as important as the cardiac system, respiratory system or the nervous system is to a human body. The Vital Signs Approach will allow project stakeholders and managers to appreciate progress on the value chain without necessarily measuring everything as propounded in the traditional approach described earlier. The million dollar question then is: Which are the VC Vital Signs? There are two ways to answer this question: the first is when we are facilitating the revival of a value chain and the second is when we are developing a new value chain. In the first instance, in most cases, some but not all VC nodes are not working properly, and in the second scenario none of the nodes exist. Identifying Vital Signs for the second scenario is somewhat simpler than for the former. However, this paper contends that the Vital Signs are domiciled at the Production Node. In agricultural value chains this is represented by increased production at the farmer level. Thus increased productivity is a sign of efficiency at the input and output levels. In actual sense you can ask the farmer about the availability of inputs over a number of seasons to get an appreciation of the efficiency of the input market, and the same can be done for the output markets.

Summary

If smallholder farmers are organised into functional, win-win contract farming arrangements where they are exposed to good agricultural practices, appropriate technology, entrepreneurial skills and market prices, they will realize increased productivity and incomes.

Problem Statement

Edible oil and oil crops are among the most widely traded commodities in the world. These crops constitute an important mainstay of the rural economy in Zimbabwe.
The sector plays a significant role not only for the rural economy, but also for the national economy at large, as it represents the largest cash crops grown by marginalized smallholder households in all the agro-ecological zones of the country. In Zimbabwe oil crops like cotton, groundnut and soya bean are the second major crops grown, with an estimated total cropped area of more than 540,000 hectares involving more than one million smallholder farmers. Soya beans and cotton, like tobacco, are key export or cash field crops. The country’s climate is suited to the growing of a variety of oil crops which include cotton, soya bean, groundnut, sunflower and sesame. Most agricultural produce is processed locally, as Zimbabwe has well established industrial processing capacity whose utilization has dropped due to raw material shortage. Production is characterized by labor-intensive, low-input and rain-fed cultivation that results in low yield. For most oil crops, except for soya beans, yields are below one ton/ha. Total annual oilseeds production is estimated to be only 30% of the annual consumption of +550,000 tons. The Fast Track Land Reform Program resulted in the disruption of 70% of all non-communal agricultural land, and extreme economic decline led to a 58% decrease in agricultural production from 2000 to 2010. The economic decline that led to hyperinflation dissipated liquidity, creating a situation where credit is mostly non-existent or too expensive for agricultural production. Without demand for inputs (due to lack of credit), the input supply chain disappeared (although it is regaining strength, partly due to NGO support), and a lack of financing also prevented investment in crucial infrastructure (e.g., irrigation systems). All these factors contributed to low production and productivity. Low productivity levels result in small gross production volumes and insufficient supply of raw materials for the manufacturing sector.
The above are caused by inadequate access to and utilisation of inputs, insufficient credit facilities and a general lack of organization of smallholder farmers. The soya bean manufacturing sector has capacity to take 340,000MT of grain, against a harvest of below 70,000MT achieved in 2014. Groundnut manufacturing capacity is 6,000MT. Processors run out of local material supply by September-October every year. Sesame manufacturing requirements are estimated at 1000MT against a supply base of an estimated 1000MT. As a result, manufacturers are scaling down or closing. Those still operating rely heavily on imports to meet demand. Closing of factories results in loss of jobs, contributing to increased poverty levels. The gap between demand and supply resulted in SNV coming in with an intervention.

Intervention Logic

The edible oilseeds value chain development requires both functional as well as institutional approaches. There is high potential for improvement; for most oil crops productivity per ha can be doubled through the use of improved farm practices at smallholder level. The existence of large areas of uncultivated and fertile land offers good opportunities for organic and sustainable oilseeds production. Demand for oilseeds is not a problem, since opportunities for oilseeds export are not fully exploited yet because of inefficient marketing, improper cleaning and sometimes poor contract discipline. Nor have domestic demands been sufficiently met. Increasing interest in and attention to the oilseeds value chain through commercialization of smallholder agriculture, and facilitating the establishment and development of alliances of oilseeds actors and enterprises for advocating reforms and tackling policy and market constraints, will be the key to trigger positive change.
SNV through the RARP-CSF will contribute towards the creation, reinstatement and growth of sustainable commercial input and output marketing channels and services to reach 6,000 oilseed smallholder farming households. This will contribute to food security and improved incomes that will enhance the lives of smallholder farmers and contribute to the revival of the economy of Zimbabwe. For this to be achieved there will be a need to promote market-driven smallholder production of oilseed crops by improving the vibrancy of the farmer-market interface and forging partnerships between growers and other important value chain players. The intervention will be guided by value chain studies carried out by SNV in 2012 on groundnuts and soya bean. The strategy will focus on three main crops: sesame, soya bean and groundnuts. Primarily, soya bean and groundnut farmers will get inputs through a contract farming scheme while sesame smallholder farmers get inputs through cash sales. Provision of extension and training will be through group trainings, field days, commodity fairs and demonstration plots.

What is SNV’s Intervention Role

SNV, in collaboration with local capacity builders, private sector partners and government departments, will undertake capacity building initiatives for the various oilseeds value chain actors including farmers, farmer associations, the private sector and public extension services. Specifically SNV will:

Risks

Today, together with my M&E colleague Thando Nkomo, I did something totally crazy! Our country director asked us to review our M&E practice and make a presentation to the whole SNV team. Instead of a PowerPoint presentation we chose to enact a court trial. We took one project manager by surprise by making her the accused and having her defend the M&E practice in her project. We selected our HR Officer to be the Judge, my colleague acted as the prosecutor and I as the defence attorney, and the rest of the staff members became the jury. The prosecutor indicted the project for not having a rigorous M&E system, from having no good M&E framework, poor resource planning and insufficient data collection, verification, storage and analysis, right through to poor reporting. The manager had to defend everything, even producing evidence of up-to-date databases, reports sent to donors and an evaluation plan and resources. The whole process took about 45 minutes and at the end of it she was exhausted and was found guilty! After the trial, several staff members came confessing that they now understood M&E better and that they appreciated the method used, as opposed to a PowerPoint presentation!
